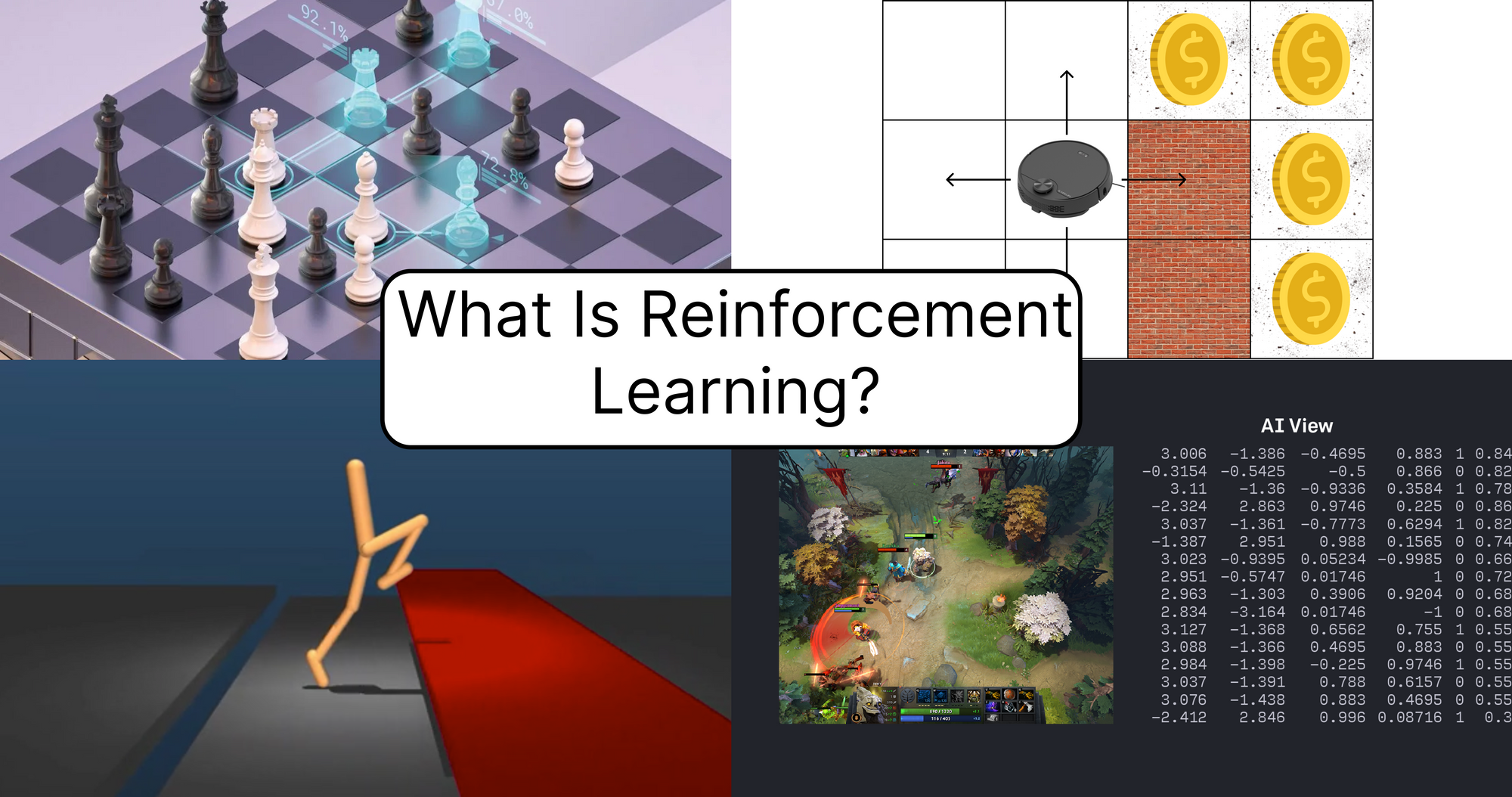

What Is Reinforcement Learning? 🤖

Reinforcement Learning (RL) is an area of Machine Learning where the model is trained to make a sequence of decisions under different conditions.

Let’s see an example:

Let’s imagine that we have a robot vacuum that cleans the floor of an apartment. To do its job well, the robot has to figure out how to clean the whole apartment in the least amount of time. The better the robot does, the better it is for you. What do you think are the best actions, in general, for cleaning the whole floor efficiently?

The robot should be able to recognize where the walls in the apartment are, so that it does not hit every wall on its way. More than that, it should remember where it has already been, so that it does not repeat the same journey in a loop.

Now comes the biggest question: how do we train a model to work all of this out? We could generate data, but labeling it would take a lot of time and patience. I didn’t divide the apartment into squares for no reason. This is where Reinforcement Learning comes in: as I wrote above, with this technique we can train models to make a sequence of decisions depending on the current state, and in our case each square is a different state. One more thing: we do not have to generate data ourselves. We just need an environment that generates data for us, and the robot will learn by making mistakes. We will help it by showing what is right and what is not.

This is one of the things that differentiates RL from other techniques for training ML models: we do not have to wait until we have a big labeled dataset, we just have to create an environment.

Reinforcement Learning Basic Concepts

Agent - The entity that takes all the actions; in our case, it is the robot.

Environment - The world in which the Agent exists and acts; in our case, the apartment.

State (S) - The current situation of the Agent (for example, the robot is in square 5, has a wall in front of it and dirt on its left). The state changes after almost every action the Agent takes.

Actions (A) - The possible actions of the Agent; in our case, moving in one of 4 directions (forward, back, left, right).

Reward (R) - Feedback from the environment for getting into a state.

Policy (π) - The rule the Agent currently uses to complete its task; in our case, the algorithm the robot uses to decide which action to take in a specific state.

Value (V) - The total future reward that the Agent would receive on average, starting from a specific state and continuing to follow the current policy (the higher, the better).
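Written as a formula, the Value is usually expressed as an expected sum of future rewards. The notation below is the common textbook form, not something defined in this article: the time index $t$, the reward $R_{t+k+1}$ received $k+1$ steps later, and the discount factor $\gamma \in [0, 1)$ (which makes near rewards count more than distant ones) are all assumptions added for illustration.

$$
V^{\pi}(s) = \mathbb{E}_{\pi}\left[\sum_{k=0}^{\infty} \gamma^{k} R_{t+k+1} \,\middle|\, S_t = s\right]
$$

For example, if the robot following its policy from some square collects rewards of 1, 1 and 1 on the next three moves and nothing afterwards, then with an assumed $\gamma = 0.9$ that run is worth $1 + 0.9 \cdot 1 + 0.81 \cdot 1 = 2.71$. The Value of the square is the average of such totals over all the runs the policy could produce from it.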

Let’s look at the order in which things happen inside an environment.

The Algorithm

- The Agent is placed inside an environment;

- Based on the current state, the Agent decides what to do using its current policy (the policy says which action is best to take in the current state);

- The Agent performs the action, moves to a new state and receives the reward for the new state (with the help of the reward, the Agent can judge whether the move was worth it or not);

- The new reward changes the behaviour of the Agent in order to maximize its efficiency (a high reward means the action was a good one, and vice versa);

- The new state becomes the current state, and steps 2, 3 and 4 are repeated.
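The steps above can be sketched as a short Python loop. This is only an illustration under stated assumptions, not the setup used in the article: the `CorridorEnv` class, the reward numbers (+1 for cleaning a new square, -0.1 for revisiting a clean one, -1 for hitting a wall) and the `random_policy` function are all hypothetical, and the apartment is reduced to a single row of squares. The policy here is random and never changes, so step 4 (learning from the reward) is only marked with a comment.

```python
import random


class CorridorEnv:
    """Hypothetical 1-D apartment: squares 0..n-1 in a row, all dirty at the start."""

    ACTIONS = ("left", "right")

    def __init__(self, n_squares=5):
        self.n_squares = n_squares
        self.reset()

    def reset(self):
        self.position = 0
        self.dirty = [True] * self.n_squares
        self.dirty[0] = False  # the starting square counts as already cleaned
        return self._state()

    def _state(self):
        # State = where the robot is plus which squares are still dirty.
        return (self.position, tuple(self.dirty))

    def step(self, action):
        target = self.position + (1 if action == "right" else -1)
        if target < 0 or target >= self.n_squares:
            reward = -1.0              # bumped into a wall, robot stays put
        else:
            self.position = target
            if self.dirty[target]:
                self.dirty[target] = False
                reward = 1.0           # cleaned a new square
            else:
                reward = -0.1          # wasted a move on a clean square
        done = not any(self.dirty)     # episode ends when everything is clean
        return self._state(), reward, done


def random_policy(state, actions, rng):
    """Placeholder policy: ignores the state and picks any action."""
    return rng.choice(actions)


def run_episode(env, policy, rng, max_steps=200):
    state = env.reset()                               # step 1: place the Agent
    total_reward, steps = 0.0, 0
    while steps < max_steps:
        action = policy(state, env.ACTIONS, rng)      # step 2: policy picks an action
        next_state, reward, done = env.step(action)   # step 3: act, observe, get reward
        total_reward += reward                        # step 4 would update the policy here
        state = next_state                            # step 5: new state becomes current
        steps += 1
        if done:
            break
    return total_reward, steps


if __name__ == "__main__":
    print(run_episode(CorridorEnv(), random_policy, random.Random(0)))
```

Swapping `random_policy` for a function that actually uses `state` is where a learning algorithm would plug in.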

How RL solves the problem

Because we have an environment, we can compute the reward the Agent would get for taking an action in a specific state. By seeing whether that reward is good or bad, we can tell the Agent (through some loss function) that it should or should not keep taking this action in states like this one. After many, many epochs of training, the Agent (the robot) learns how to maximize its reward, and which action each state calls for in order to get the best reward.

There are actually many RL algorithms for training the Agent. I will not cover them in detail in this article, but I will list some of them so you know what to read next.

Famous RL Algorithms:

- Policy Iteration

- Monte Carlo Methods

- Q-learning

- Deep Q-learning

- SARSA
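To give a taste of what one of these looks like in code, here is a minimal sketch of tabular Q-learning. It is an illustration under stated assumptions rather than a full treatment: the toy corridor (squares 0 to 4 with dirt in the last one), the reward numbers, and the hyperparameters `alpha` (learning rate), `gamma` (discount factor) and `epsilon` (exploration rate) are all hypothetical choices made for this example.

```python
import random
from collections import defaultdict

ACTIONS = ("left", "right")
N_SQUARES = 5  # hypothetical corridor: squares 0..4, dirt in square 4


def step(state, action):
    """Toy environment: returns (next_state, reward, done)."""
    target = state + (1 if action == "right" else -1)
    if target < 0:
        return state, -1.0, False      # bumped into the left wall
    if target == N_SQUARES - 1:
        return target, 10.0, True      # reached the dirt, episode ends
    return target, -0.1, False         # small cost for every ordinary move


def train(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, max_steps=100, seed=0):
    rng = random.Random(seed)
    q = defaultdict(float)             # q[(state, action)] -> estimated future reward
    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            # Epsilon-greedy: mostly take the best known action, sometimes explore.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action)
            # Q-learning update: move the estimate toward
            # reward + gamma * (best estimate available from the next state).
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q):
    """For every non-terminal square, the action with the highest learned estimate."""
    return {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_SQUARES - 1)}


if __name__ == "__main__":
    print(greedy_policy(train()))
```

The table `q` plays the role of the Agent's memory: after enough episodes, reading off the best action per square gives a policy, which is the "learning from rewards" idea described earlier in its simplest form.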

History of Reinforcement Learning

There is a great and very detailed article on this topic that explains how reinforcement learning was first tested on animals, so I would rather link it here and say more about the present-day achievements of RL.

One of the fields where RL is used is game development, to create intelligent bots that can compete against humans or even play better than them. It largely started with chess, where humans lost their superiority to computer programs long ago; more recently, RL-trained agents such as AlphaZero have reached superhuman strength in the game.

We will talk about Dota 2, a team game played 5 v 5 in which you have to destroy the enemy base in order to win. The game is hard and complicated, with lots of variables that are difficult to manage even for humans, and only players with 10k+ hours or so understand the majority of its mechanics. While humans need that many hours to master the game, bots need far less wall-clock time, because they can play tens of thousands of games in parallel on different computers and quickly reach a high skill level.

In 2019, OpenAI Five, the RL system trained to play against professional Dota 2 players, defeated the Dota 2 world champions, team OG, becoming the first AI to beat the world champions in an esports game.

Where Reinforcement Learning is used

- As I mentioned above, RL is used for playing computer games and board games; games like Chess and Go are already an easy task for RL agents.

- In robotics and industrial automation, RL is used to make robots more efficient. Maybe you have heard that there is an Italian restaurant where you can taste a pizza made entirely by a robot!

- Ads: RL agents are trained to maximize the click-through rate on websites by making ads more suitable for specific people.

- Dialog agents (text, speech) used for customer service.

Where to start

I think the best way to start with RL is by reading the following book, as it is considered one of the best RL books available.

- Reinforcement Learning: An Introduction, a book by the father of Reinforcement Learning, Richard Sutton, and his doctoral advisor, Andrew Barto. An online draft of the book is available here.

Conclusion

Reinforcement Learning is a very interesting part of Machine Learning. It is not yet very mature, so we may not see many uses of it in real-world scenarios. The key difference between RL and other methods is simple: RL has an environment in which we can recreate the real case and from which we can generate practically unlimited amounts of data, giving the model an easy way to interact with the environment and look for ways to make the Agent’s job more efficient. One of its biggest downsides is that, for harder tasks, it sometimes requires a lot of iterations to become at least as efficient as a human.